This AI Startup Spent $12M on a Domain Name… and Then Went Bankrupt

Why it failed, what you can learn, the hidden mechanics of trust in AI systems, and a step-by-step operating plan for auditing onboarding, identifying trust breaks, and designing reliable AI workflows

The $12M Domain That Couldn’t Save an AI Product

Recently, I came across a story that reveals something deeply important about how AI products actually succeed or fail.

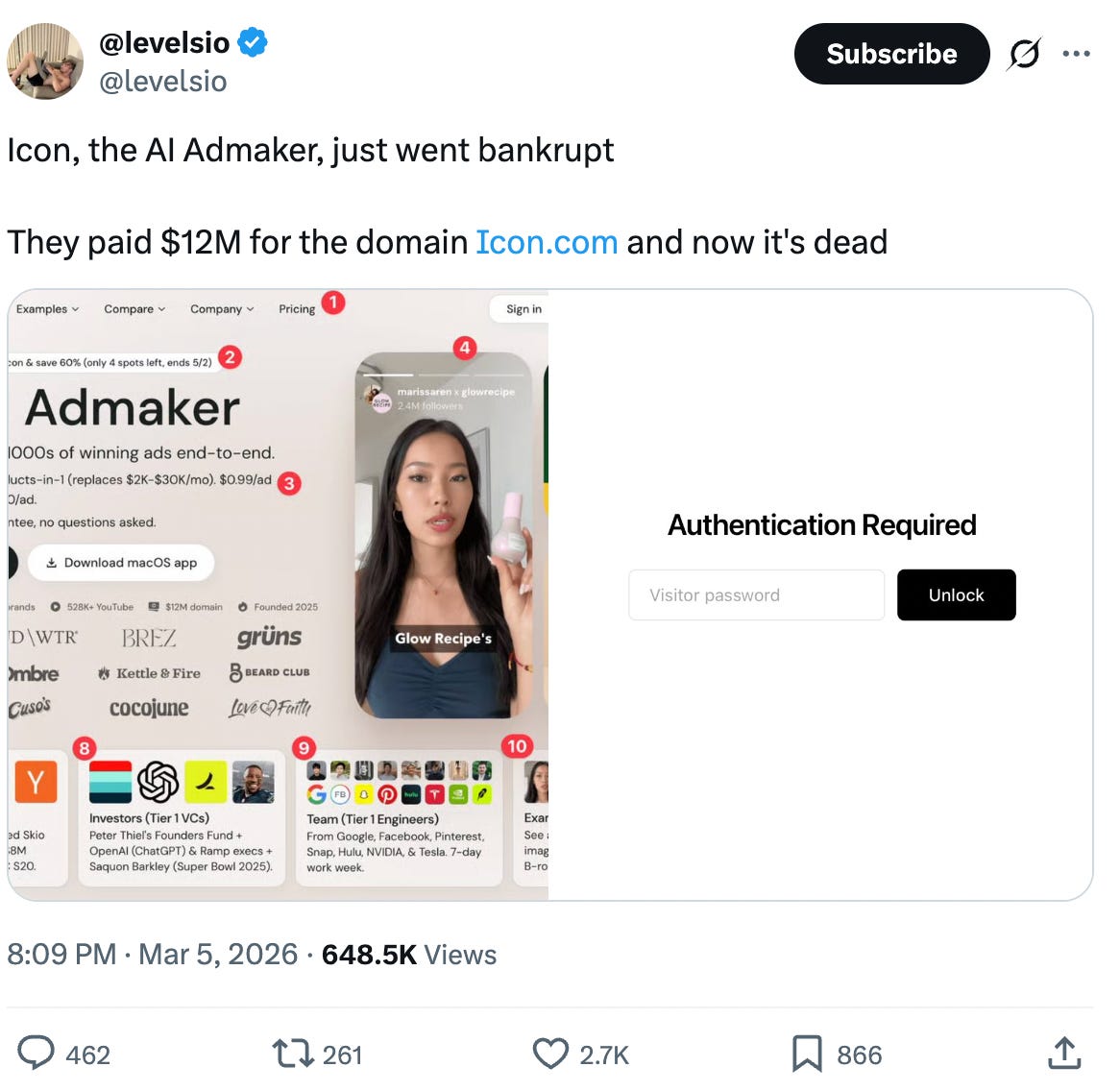

An AI ad-making startup called Icon reportedly went bankrupt.

This is the same company that became widely known in the tech world after spending $12 million to acquire the domain name “icon.”

Yes, twelve million dollars for a domain name.

Naturally, the internet did what it always does when a story like this appears: people joked about the decision, shared memes about the domain purchase, and treated the whole situation as another cautionary tale about startup vanity spending.

But focusing on the domain purchase misses the deeper and far more important lesson hiding underneath the story.

The real takeaway is not about domains.

The real takeaway is about how AI products are judged by users, and why so many AI startups collapse even after generating massive early attention.

Because in the world of AI products today…

Marketing can get you attention…

Distribution can get you trials…

Hype can get you headlines…

… but none of those things can save a product that users stop trusting after the very first experience.

If you are building an AI product today, whether as a product manager, founder, engineer, or product leader, this story is not just startup gossip.

It is a warning signal about how the AI product market actually works.

The wrong takeaway: “Don’t spend money on branding”

Before we go deeper, it’s important to address the obvious misinterpretation that many people immediately jump to.

The lesson here is not that branding is useless or that companies should never invest in positioning, marketing, or distribution.

Brand matters.

Positioning matters.

Great domains matter.

Landing pages matter.

Creator partnerships matter.

All of these things can dramatically accelerate product growth when they are working in alignment with a strong underlying product experience.

But those things only work when they are amplifying something real.

If the core product experience fails to deliver on the promise that marketing creates, then great marketing does not become an advantage.

Instead, it becomes an accelerant for disappointment.

In other words, great marketing simply brings more users into a product that is not yet ready to earn their trust.

And that dynamic is particularly dangerous in AI.

In traditional SaaS products, users sometimes tolerate friction.

They might forgive clunky UX.

They might return after a mediocre onboarding experience.

They might experiment with the product several times before deciding whether it truly fits their workflow.

But AI products operate under a very different psychological contract.

The moment a user sees hallucinated outputs, unreliable results, inconsistent behavior, or a workflow that feels confusing and unpredictable, trust collapses much faster than it does in traditional software.

And once that trust collapses, users rarely say:

“Maybe I should try this tool five more times.”

Instead, they simply leave.

The real lesson: AI products are judged on credibility, not novelty!

One of the biggest misconceptions that still exists in the AI startup ecosystem is the belief that novelty alone is enough to sustain product adoption.

Many AI teams build as if a magical demo is sufficient to create long-term usage.

They assume that if the product looks impressive in a demo, the real product will naturally find its way into user workflows.

They assume that if the landing page is polished and the product generates impressive outputs occasionally, users will stay patient while the product matures.

They assume that influencer hype or social media buzz will buy them enough time to refine the product later.

This logic may have worked during earlier software waves when users were more tolerant of imperfect tools and more willing to experiment with emerging products.

But it does not work nearly as well in AI.

AI products are not judged simply by whether they can produce something impressive once.

They are judged by a much more demanding question:

Can I trust this enough to incorporate it into my workflow?

Users subconsciously evaluate AI products through questions like:

Can I rely on this output, or do I have to double-check everything?

Does the system behave consistently, or does it produce wildly different results every time?

Does the product understand the context of the problem I am trying to solve?

Does it reduce the amount of work I have to do, or does it introduce new risks?

Would I actually use this again tomorrow?

This is why the core challenge in AI product development is not creating delight.

It is creating credibility.

And credibility is not created by branding.

It is created by product decisions.

It’s a good time to revisit these lessons:

Lesson 1: In AI, the first user experience determines everything

For many AI products, the first user session is effectively the entire game.

The first prompt a user writes.

The first output the system generates.

The first workflow the user attempts to complete.

Those moments determine whether the product feels like leverage, a toy, a liability, or something fundamentally unreliable.

That is why onboarding in AI products must be treated very differently from onboarding in traditional SaaS tools.

In most software categories, onboarding is primarily about helping users discover features and understand the product’s capabilities.

In AI products, onboarding is fundamentally about designing trust.

The first interaction should not maximize the number of features the user sees.

It should maximize the probability that the user experiences a moment where the system clearly demonstrates reliable value.

Unfortunately, many AI products do the opposite.

They place users directly into a blank prompt box with little guidance.

They promise broad capabilities that the system cannot yet consistently deliver.

And they allow the model itself to generate the first impression without any scaffolding or workflow structure.

That approach might look flexible on the surface.

But in practice, it often creates confusion, unreliable outputs, and early disappointment.

The best AI product teams design first-time experiences around carefully chosen use cases where the system performs reliably, the workflow is structured, and the user can quickly see a clear and verifiable result.

In other words, the goal is not to impress the user with possibilities.

The goal is to earn the user’s belief.

Lesson 2: Weak product quality quietly destroys growth economics

Another important lesson hidden inside this story is how deeply product quality influences growth performance in AI companies.

Many teams treat product quality and growth strategy as separate conversations.

But in AI products, the relationship between the two is extremely tight.

If the product experience is unreliable, every dollar spent on acquisition becomes less efficient.

Advertising brings users into the funnel, but those users churn quickly after the first disappointing interaction.

Influencer partnerships generate bursts of curiosity, but that curiosity does not convert into repeat usage.

Viral posts produce traffic spikes, but those spikes fade because the product does not generate enough trust to sustain long-term behavior.

This is why weak AI products can appear healthy for a short period of time.

They may have strong branding, large waitlists, impressive demo videos, and significant social media attention.

But underneath that surface excitement, the underlying economics are deteriorating.

Because the product is failing to convert attention into trust, and trust is what converts usage into retention.

Lesson 3: Capital allocation reveals what companies actually believe

The $12 million domain purchase is symbolic, but the deeper lesson is about capital allocation.

Every company has its own version of this decision.

Maybe it is not a domain name.

Maybe it is investing heavily in launch theatrics before the product is reliable.

Maybe it is hiring large growth teams before the core workflow has stabilized.

Maybe it is prioritizing visual polish and branding while ignoring deeper reliability issues in the system.

Maybe it is scaling distribution before the product has earned user trust.

Every resource allocation decision signals what the company believes actually drives value.

And in the AI market today, one of the easiest ways to fail is to overinvest in appearance while underinvesting in reliability.

The companies that ultimately win often look boring internally before they look impressive externally.

They spend disproportionate time and resources on things like evaluation systems, workflow reliability, latency optimization, model routing decisions, guardrails, and deep user feedback loops.

These investments rarely generate flashy launch announcements.

But they generate something far more important.

Products that users trust enough to use repeatedly.

Lesson 4: Reliability is now a core product responsibility

Another major shift happening in AI product development is the expanding role of the product manager.

In many teams today, hallucinations, inconsistent outputs, latency problems, and unpredictable model behavior are still treated primarily as engineering issues.

But in reality, reliability is a product problem.

Users do not experience your company’s internal structure.

They experience the output.

If the output is inconsistent, the product feels broken.

If the workflow is confusing, the product feels unreliable.

If the system produces unpredictable results, the product feels unsafe.

This means product managers building AI systems must deeply understand the behavior of the models that power their products.

They must understand where hallucinations occur.

They must understand how evaluation frameworks work.

They must understand when generative flexibility is valuable and when strict constraints are necessary.

They must design fallback mechanisms that allow users to recover when outputs fail.

The next generation of AI product leaders will not simply be the people who know how to add AI features.

They will be the people who know how to turn probabilistic model behavior into reliable product experiences.

A simple framework: The AI Trust Stack

If we compress all of these lessons into a single framework for evaluating AI products, it looks something like this:

1. Promise: What are we telling users the product can do?

2. First Proof: What do users experience within the first few minutes of interacting with the system?

3. Repeatability: Does the product produce reliable results consistently enough for users to build confidence?

4. Recoverability: When the system produces weak outputs, can users easily correct or recover from them?

5. Operational Trust: Does the overall system feel stable enough that users are comfortable incorporating it into their daily workflows?

Many AI products perform well on the first step.

Some succeed on the second.

Very few are strong across the entire stack.

And that gap is where most AI startups struggle.

The question every AI product team should ask

Before launching a major marketing campaign or scaling distribution, product teams should ask themselves one simple question:

If this campaign succeeds beyond our expectations, is the product actually ready for the attention that will follow?

Because attention is not the same thing as validation.

Attention simply exposes the product to more users more quickly.

If the product is ready, that attention accelerates growth.

If the product is not ready, that attention accelerates disappointment.

What product teams should actually do next: a deeper operational plan for the next 30 days

If you are building an AI product today, the most dangerous thing you can do after reading a story like this is nod your head, agree with the lesson, and then go back to operating exactly the way you were operating before.

Because the companies that lose in AI are often not the ones that completely misunderstand the market.

They are the ones that understand the lesson intellectually, but fail to translate it into changes in how they design onboarding, how they review output quality, how they prioritize reliability work, how they measure trust, and how they sequence product maturity relative to distribution.

So let’s make this practical.

Run a 30-day trust rebuild sprint.

The goal of this sprint is simple:

Identify where trust is being lost inside the product, fix the highest-leverage breakpoints, and redesign the team’s product process so trust becomes a first-class product metric rather than an abstract brand outcome.

Here is how I would structure it.

Phase 1: Diagnose where trust is breaking before you try to fix anything

Most teams move too quickly into solution mode.

They assume they already know what is wrong.

They assume users are dropping because the UI needs improvement, or because onboarding is too long, or because the prompt quality is poor, or because the model is not strong enough.

Sometimes those things are true.

But in AI products, the actual trust break is often much more specific and much more behavioral than teams initially assume.

For example, the problem may not be “users do not understand the value.”

The problem may be that the first output looks polished on the surface but contains just enough subtle inaccuracy that the user never fully believes the system again.

Or the problem may not be “our model is weak.”

The problem may be that users have no way to understand when the model is confident versus when it is guessing, which means they experience every output with silent doubt.

So before fixing anything, the team needs to locate the trust break with precision.

Step 1: Run a First-Use Trust Audit

Take the top one to three onboarding paths into your product and review at least 20 first-time user sessions from each path if possible.

Not 20 power users.

Not 20 internal dogfooding sessions.

Not 20 users who already understand AI.

Actual first-time users.

As you watch those sessions, do not review them like a generic activation analyst asking whether users clicked the right buttons.

Review them like a trust analyst.

You are trying to answer a different set of questions:

At what exact moment does the user begin to believe the product may be useful?

At what exact moment does doubt enter the experience?

What output, interaction, delay, or ambiguity creates that doubt?

Does the product produce one strong moment of trust early, or does it force users to “work” to discover value?

When the user receives an output, do they move forward confidently, or do they pause and inspect it with suspicion?

Do they retry quickly because they are experimenting, or because the first result failed them?

When they abandon, what belief are they leaving with?

This is important: in AI products, abandonment is not always caused by friction.

Sometimes abandonment is caused by a subtle collapse in confidence.

The user may continue interacting for a few more clicks after trust breaks, but psychologically, the product has already lost.

What to document

Create a shared review doc or spreadsheet with these columns:

Entry path

User intent

First meaningful action

First output shown

Trust-building moment

Trust-breaking moment

Failure type

Recovery attempt

Exit point

Notes on user behavior

Then categorize each trust-breaking moment into one of these buckets:

Expectation mismatch: the product promised more than it delivered

Output unreliability: the result looked wrong, shallow, hallucinated, or unusable

Workflow ambiguity: the user did not know what to do next or how to judge output quality

Verification burden: the product required too much checking, editing, or manual cleanup

Interaction fragility: small input changes produced wildly inconsistent results

System instability: latency, errors, bugs, or inconsistent response behavior

By the end of this audit, your team should not be saying, “Users seem confused.”

They should be saying something much more concrete, like:

“In 13 out of 20 sessions, users got their first output within 90 seconds, but the output was too generic to feel trustworthy, which caused them to retry twice and abandon before reaching the feature where real value appears.”

That level of specificity is what turns a vague product discussion into an actionable product plan.
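If the review sheet lives in a spreadsheet export, the tally behind a statement like that can be a few lines of code. Here is a minimal sketch, assuming each reviewed session becomes one record with a subset of the columns above. The class names, field names, and sample rows are hypothetical, not data from any real product.

```python
from collections import Counter
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class FailureType(Enum):
    # The six trust-break buckets from the audit.
    EXPECTATION_MISMATCH = "expectation mismatch"
    OUTPUT_UNRELIABILITY = "output unreliability"
    WORKFLOW_AMBIGUITY = "workflow ambiguity"
    VERIFICATION_BURDEN = "verification burden"
    INTERACTION_FRAGILITY = "interaction fragility"
    SYSTEM_INSTABILITY = "system instability"


@dataclass
class SessionReview:
    # One row of the review sheet (illustrative subset of the columns).
    entry_path: str
    user_intent: str
    trust_breaking_moment: Optional[str]  # None if trust never broke
    failure_type: Optional[FailureType]   # None if trust never broke
    exit_point: str


def summarize(reviews):
    """Count trust breaks per bucket, most common first."""
    counts = Counter(r.failure_type for r in reviews if r.failure_type is not None)
    return counts.most_common()


# Hypothetical sample rows, not real session data.
reviews = [
    SessionReview("homepage", "draft an ad", "first output too generic",
                  FailureType.OUTPUT_UNRELIABILITY, "after second retry"),
    SessionReview("homepage", "draft an ad", "first output too generic",
                  FailureType.OUTPUT_UNRELIABILITY, "after first output"),
    SessionReview("referral link", "resize a video", None, None, "completed export"),
    SessionReview("homepage", "draft an ad", "no idea what to do next",
                  FailureType.WORKFLOW_AMBIGUITY, "blank prompt box"),
]

for failure_type, count in summarize(reviews):
    print(f"{count} of {len(reviews)} sessions: {failure_type.value}")
```

The point of forcing every trust break into exactly one bucket is that the counts become comparable across onboarding paths, which is what lets one break stand out from the rest.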

Phase 2: Identify the highest-leverage trust break and isolate it

Once you have reviewed enough sessions, a pattern will usually emerge.

There will almost always be one trust break that matters more than the rest.

This is the moment where users stop feeling assisted and start feeling burdened.

This is the point where the product transitions from “helpful AI system” to “tool I now have to babysit.”

That moment is your highest-leverage product problem.

And most teams make a huge mistake here.

They try to fix five trust breaks at once.

They rewrite onboarding, add new templates, ship UI changes, improve prompts, change the model, and update the homepage copy all in parallel.

That usually creates motion, but not clarity.

The better approach is to isolate the single trust break that is doing the most damage and attack that one first.

Step 2: Define your Trust Break Statement

Write one sentence that captures the primary trust break in the product.

It should follow this structure:

When [user type] tries to [job to be done], they lose trust because [specific reason], which causes [behavioral consequence].

For example:

When first-time PM users try to generate a product requirements draft, they lose trust because the first output sounds polished but lacks real product judgment, which causes them to abandon before exploring revision workflows.

When growth teams try to use the agent for campaign analysis, they lose trust because the system does not clearly cite where insights came from, which causes them to treat the tool as brainstorming software rather than a real decision-making assistant.

When users submit open-ended prompts, they lose trust because the output quality varies too much across similar inputs, which causes them to feel the product is too fragile for repeated use.

This statement is powerful because it aligns the team around one real problem rather than ten loosely related symptoms.
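If your team keeps these statements in a tracker, it can help to store the four slots separately so nobody can leave one vague or blank. A small sketch, assuming a hypothetical `TrustBreak` record; the wording follows the plural phrasing used in the examples above.

```python
from dataclasses import dataclass


@dataclass
class TrustBreak:
    user_type: str
    job_to_be_done: str
    specific_reason: str
    behavioral_consequence: str

    def statement(self) -> str:
        # Refuse to render a statement with an empty slot.
        missing = [name for name, value in vars(self).items() if not value.strip()]
        if missing:
            raise ValueError(f"fill in every slot; missing: {', '.join(missing)}")
        return (
            f"When {self.user_type} try to {self.job_to_be_done}, "
            f"they lose trust because {self.specific_reason}, "
            f"which causes {self.behavioral_consequence}."
        )


primary = TrustBreak(
    user_type="first-time PM users",
    job_to_be_done="generate a product requirements draft",
    specific_reason="the first output sounds polished but lacks real product judgment",
    behavioral_consequence="them to abandon before exploring revision workflows",
)
print(primary.statement())
```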

Phase 3: Redesign the first experience around a trustworthy win

One of the most common problems in AI products is that the first meaningful user win appears too late.

The product may eventually be valuable, but the user has to fight through too much ambiguity, too many retries, or too much weak output before reaching that value.

That is fatal in AI.

The first-use experience must get the user to a trustworthy win quickly.

A trustworthy win has four properties:

The user understands why the output is useful.

The output is strong enough that it does not immediately trigger doubt.

The workflow feels guided rather than fragile.

The user can verify the value without doing excessive cleanup.

Step 3: Design a “Trustworthy First Win” flow

Take your most important new-user use case and rebuild the first-run experience around it.

Here is how:

A. Narrow the initial use case

Do not start with the broadest possible promise.

Start with the use case where the model performs most reliably and where the value can be understood quickly.

This often means resisting the temptation to lead with “Ask anything” or “Use AI for everything.”

Broadness feels impressive in marketing but often performs terribly in onboarding.

Instead, ask:

What is the narrowest, highest-confidence workflow we can guide users through first?

Which use case has the strongest ratio of value delivered to risk introduced?

Where can we produce a result that feels both useful and believable within minutes?

B. Add scaffolding

The blank prompt box is often the enemy of trust.

Users do not always know what good input looks like, and when they provide weak input, they blame the product for weak output.

That means the product should provide more structure upfront.

Examples of scaffolding include:

guided prompts

input templates

suggested workflows

examples of strong inputs

clearer constraints on what the system is optimized to do

pre-filled context fields

progress indicators that make the workflow feel intentional

Scaffolding is not about reducing flexibility forever.

It is about increasing the probability of a strong first outcome.

C. Improve output legibility

Sometimes the output itself is not terrible, but it is hard to trust because it is too unstructured, too verbose, too vague, or too hard to validate.

This is a product design problem.

Ask:

Can the output be broken into clearer sections?

Can sources, rationale, or confidence indicators be shown?

Can the result be transformed into a format that is easier to inspect?

Can the output make its assumptions more visible?

Can the user understand what to trust and what to edit?

Remember: users do not just need good answers.

They need answers they can assess.

D. Build lightweight verification into the experience

AI products lose trust when they ask users to do too much hidden verification work on their own.

Where possible, the product should help users validate the result.

This could mean:

surfacing sources

highlighting extracted evidence

showing what input context drove the answer

indicating low-confidence zones

enabling side-by-side comparison with source material

making edits and corrections fast

The goal is not to eliminate verification.

The goal is to make verification feel supported instead of burdensome.

Phase 4: Make reliability a recurring product review, not a one-time cleanup task

Many teams treat reliability improvements like technical debt.

They plan to “clean it up later” after growth improves or after the next launch.

That mindset is deadly in AI products because reliability is not a polish layer you add later.

It is the core of the user experience.

So the team needs a recurring operating mechanism that forces reliability to be evaluated continuously.

Step 4: Add a Reliability Review to the product development process

For every AI-powered feature, workflow, or major release, run a dedicated review with product, engineering, design, and if possible customer-facing teams.

The purpose of this review is not to ask whether the feature works in the happy path.

It is to ask whether the feature is trustworthy enough to deserve repeated use.

Use a simple set of review questions:

Output quality

What does a good output look like here?

What does a dangerous or misleading output look like?

How much variation is acceptable before the experience feels unreliable?

Failure modes

Where is this workflow most likely to break?

What kinds of user inputs produce weak outputs?

What edge cases are most likely to erode trust?

Recovery

If the output is weak, can the user recover quickly?

Does the system help the user improve the result?

Is failure visible, or does the product present weak output with false confidence?

User psychology

When this feature fails, how will the user interpret the failure?

Will they think “I used it wrong,” or “this product is unreliable”?

Are we asking the user to carry too much uncertainty?

Launch readiness

Is the product mature enough for increased attention?

Are we overpromising relative to current system behavior?

Should we narrow the use case before scaling distribution?

The output of this review should be a simple artifact: a Reliability Readiness Memo that captures:

intended use case

trust-critical outputs

top failure modes

mitigations

recovery mechanisms

launch recommendation

This becomes incredibly valuable over time because it helps the team build institutional judgment around what makes an AI feature trustworthy.
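One way to keep the memo honest is to give it a fixed shape. The sketch below is an assumption about how you might structure it, not a standard format: the class names are hypothetical, and the rule that any failure mode with neither a mitigation nor a recovery path blocks a wider launch is an illustrative rule of thumb. It needs Python 3.9 or later.

```python
from dataclasses import dataclass, field


@dataclass
class FailureMode:
    description: str
    mitigation: str = ""  # empty string means no mitigation yet
    recovery: str = ""    # how the user gets back on track, if at all


@dataclass
class ReliabilityMemo:
    intended_use_case: str
    trust_critical_outputs: list[str]
    top_failure_modes: list[FailureMode] = field(default_factory=list)

    def open_risks(self) -> list[str]:
        """Failure modes with neither a mitigation nor a recovery path."""
        return [f.description for f in self.top_failure_modes
                if not f.mitigation and not f.recovery]

    def launch_recommendation(self) -> str:
        # Assumed rule of thumb: any uncovered failure mode blocks a broad launch.
        if self.open_risks():
            return "narrow the use case first"
        return "ready for wider release"


# Hypothetical example memo.
memo = ReliabilityMemo(
    intended_use_case="draft a first version of a product requirements doc",
    trust_critical_outputs=["requirements list", "success metrics"],
    top_failure_modes=[
        FailureMode("generic output on short prompts",
                    mitigation="guided input template"),
        FailureMode("invented metrics presented as facts"),
    ],
)
print(memo.launch_recommendation(), memo.open_risks())
```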

Phase 5: Create trust metrics, not just funnel metrics

A major reason teams underinvest in trust is that they do not measure it directly.

They measure signups, activation, retention, conversion, and maybe NPS.

Those metrics matter, but they often hide the specific mechanics of trust collapse.

An AI product can have decent top-line numbers while still creating enormous hidden verification burden and low user confidence.

So teams need trust-adjacent metrics that help them see what traditional dashboards miss.

Step 5: Build a basic Trust Dashboard

You do not need a giant analytics initiative for this.

Start with a few focused signals:

First-output success rate

Of all new users who reach the first output, how many rate it as useful or continue meaningfully without immediate retry?

Retry burden

How many times does the average user have to retry before reaching a usable result?

Time to trustworthy value

How long does it take a new user to reach a result they are likely to believe?

Verification load

How much manual editing, checking, or source validation does the workflow require before the output becomes usable?

Recovery success rate

When the first result is weak, how often do users recover and continue versus abandon?

Reuse confidence

Do users come back to the same workflow again within a short period, suggesting the product earned enough trust to become part of behavior?

None of these metrics are perfect on their own.

But together, they tell a much richer story than “activation is down 7%.”

They help the team see whether the product is genuinely earning belief.
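To make that concrete, here is a rough sketch of how most of these signals could be computed from first-session records. Everything about the record shape is an assumption: the field names are hypothetical, a "weak" first output is whatever your reviewers or users flag, and the 7-day return window is just one possible definition of "a short period." Verification load is left out because it depends on how your product tracks edits.

```python
from dataclasses import dataclass
from statistics import mean, median
from typing import Optional


@dataclass
class FirstSession:
    retries_before_usable: Optional[int]  # None if the user never reached a usable result
    seconds_to_usable: Optional[float]    # None if the user never reached a usable result
    first_output_weak: bool               # flagged as weak by a reviewer or the user
    returned_within_7_days: bool          # assumed definition of "a short period"


def trust_dashboard(sessions):
    if not sessions:
        raise ValueError("need at least one session")
    reached = [s for s in sessions if s.retries_before_usable is not None]
    weak_first = [s for s in sessions if s.first_output_weak]
    recovered = [s for s in weak_first if s.retries_before_usable is not None]
    return {
        "first_output_success_rate":
            sum(1 for s in sessions if not s.first_output_weak) / len(sessions),
        "retry_burden":
            mean(s.retries_before_usable for s in reached) if reached else None,
        "median_seconds_to_value":
            median(s.seconds_to_usable for s in reached) if reached else None,
        "recovery_success_rate":
            len(recovered) / len(weak_first) if weak_first else None,
        "reuse_rate":
            sum(s.returned_within_7_days for s in sessions) / len(sessions),
    }


# Hypothetical sessions, not real data.
sessions = [
    FirstSession(0, 60.0, False, True),
    FirstSession(2, 240.0, True, True),
    FirstSession(None, None, True, False),
    FirstSession(1, 120.0, True, False),
]

for name, value in trust_dashboard(sessions).items():
    print(f"{name}: {value}")
```

Retry burden and time to value are computed only over users who eventually reached a usable result, so read them next to the recovery rate. A low retry burden means little if most users with a weak first output never recover at all.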

Phase 6: Align growth with product maturity so attention does not outpace trust

This is where the Icon story becomes especially relevant.

A lot of companies make a silent but devastating sequencing mistake.

They scale visibility before the product has earned resilience.

That means every marketing win creates more product damage.

The better the campaign performs, the more users encounter a fragile experience.

This creates a dangerous illusion.

The company thinks it has a growth problem.

In reality, it has a trust-conversion problem.

Step 6: Introduce a Distribution Readiness Check

Before any major launch, partnership, ad push, or PR moment, ask the team to answer these questions:

If 10,000 new users arrived this week, would the first-use experience earn trust fast enough?

Are we confident the product delivers a believable result within the first session?

What failure mode would become most visible if volume doubled tomorrow?

Are we about to amplify a product strength, or expose a product weakness?

Is our messaging narrower than our current capabilities and honest about them, or broader and more aspirational?

If you cannot answer those questions clearly, the team probably should not scale distribution yet.

Or at minimum, it should narrow the promise.

What a strong 30-day output should look like

By the end of this 30-day sprint, the team should have produced tangible artifacts, not just better conversations.

At minimum, they should have:

a First-Use Trust Audit

a clearly written Trust Break Statement

a redesigned first-run trustworthy win flow

a Reliability Review template

a small Trust Dashboard

a Distribution Readiness Check for future campaigns

These artifacts matter because they turn “trust” from a vague strategic aspiration into an operating discipline.

And that is what most AI teams still do not have.

The deeper principle product builders should remember

The biggest mistake product teams make in AI is assuming trust is the natural byproduct of good branding, good models, or good intentions.

It is not. Trust is designed. Trust is sequenced.

And in the current AI market, trust is not a soft concept sitting on the edge of product strategy.

It is the product strategy.

If you want to build AI products that people actually trust

This is exactly why we created the AI Product Management Certification.

Inside the program, we teach product builders how to design and ship AI products that go far beyond simple demos or AI feature integrations.

You will learn how to design reliable AI workflows, build AI prototypes and agents, understand model behavior in production environments, and create evaluation systems that reduce hallucinations and improve reliability.

The program is taught by leaders building frontier AI products today, including Rohan Varma, the first PM at Cursor (the fastest company ever to reach $100M ARR), and Henry Shi, former Super.com cofounder and now part of the technical staff at Anthropic Labs.

If you want to future-proof your career as a product leader in the AI era, these are the exact skills companies are now hiring for.
